The Astrocyte Transformer: Exploring the Connection Between Biology and AI

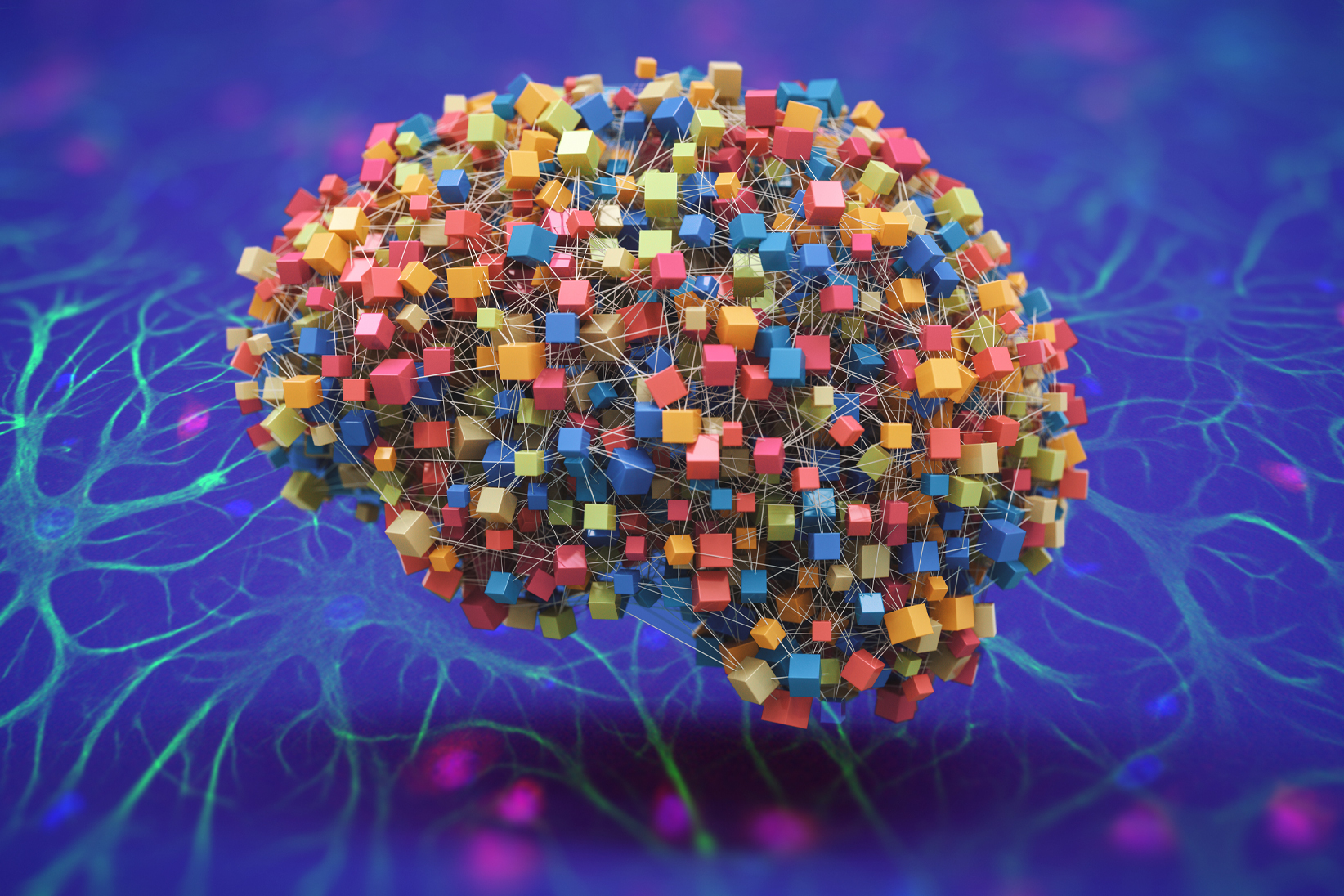

Artificial neural networks are machine-learning models loosely inspired by the human brain. Transformers, a more recent and more powerful type of neural network, underpin systems that can generate remarkably human-like text. However, it has remained unclear how a transformer could be implemented with the brain's biological components. Now, researchers from MIT, the MIT-IBM Watson AI Lab, and Harvard Medical School have proposed a hypothesis that may explain how a transformer could be built in the brain from networks of neurons and astrocytes, a type of non-neuronal brain cell.

Unveiling the Potential of Astrocytes

Astrocytes, non-neuronal cells in the brain, are known to communicate with neurons and to help regulate blood flow. However, their computational role is still poorly understood. In a new study published in the Proceedings of the National Academy of Sciences, the researchers explored that role and developed a mathematical model showing how astrocytes could work alongside neurons to form a biologically plausible transformer.

Beyond shedding light on how the brain might work, the research could also help explain why transformers perform so well on a wide range of complex AI tasks. By linking neuroscience and AI, the study opens several directions for future research.

From Biological Impossibility to Plausible Solution

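Astrocytes may hold the key to understanding how the brain could carry out self-attention, the mechanism at the heart of transformers. In self-attention, each word (token) in a prompt is compared with every other word, so the network must keep the whole context available while it computes. Neurons typically communicate through synapses linking one cell to another, but astrocytes join neurons to form tripartite synapses. Because each astrocyte contacts many synapses, it can collect the neurotransmitters released there and hold and integrate that information over longer time scales. This property could make astrocytes well suited to performing the attention operation inside a transformer.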
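To make "comparing each word with every other word" concrete, here is a minimal sketch of standard scaled dot-product self-attention as used in ordinary (artificial) transformers. It is a textbook illustration, not the neuron-astrocyte model from the study, and it assumes a simplification: the learned query, key, and value projection matrices are omitted, so the caller passes those vectors in directly. The function names are hypothetical.

```python
import math


def softmax(xs):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a sequence of token vectors.

    Every query is scored against every key, so the whole context must be
    available at once. Each output is a weighted average of all value
    vectors, with weights given by a softmax over the scaled scores.
    """
    d = len(keys[0])
    d_v = len(values[0])
    outputs = []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(d_v)]
        )
    return outputs


# Usage: three 2-dimensional "tokens" attending to one another.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(self_attention(tokens, tokens, tokens))
```

Because every query is scored against every key, the cost and the memory demand grow with the length of the context, which is why a component able to hold information over time matters in the argument above.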

Building a Neuron-Astrocyte Network

The researchers developed a mathematical model of a neuron-astrocyte network that emulates the self-attention process in transformers. By combining the core mathematics of transformers with biophysical models of astrocytes and neurons, they derived equations describing how such a network could compute a transformer's self-attention. In numerical simulations, the neuron-astrocyte network's responses to prompts closely matched those of a transformer model.
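The study's actual equations are not reproduced in this article. As a loose analogy only, the sketch below shows how an attention-like output can be computed from a running store that accumulates key-value associations over time instead of revisiting every past token. It uses causal linear attention, a known transformer variant chosen here for illustration, with the common elu(x)+1 feature map; it is not the authors' model. The `memory` and `normalizer` variables merely play a role loosely analogous to an integrating astrocyte, and all names are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def feature_map(x):
    """elu(x) + 1: keeps every feature positive so weights stay positive."""
    return [xi + 1.0 if xi > 0 else math.exp(xi) for xi in x]


def causal_linear_attention(queries, keys, values):
    """Recurrent form: integrate phi(k) v^T into a running store, token by token."""
    d_k, d_v = len(keys[0]), len(values[0])
    memory = [[0.0] * d_v for _ in range(d_k)]  # running sum of phi(k) v^T
    normalizer = [0.0] * d_k                    # running sum of phi(k)
    outputs = []
    for q, k, v in zip(queries, keys, values):
        fk = feature_map(k)
        for i in range(d_k):
            normalizer[i] += fk[i]
            for j in range(d_v):
                memory[i][j] += fk[i] * v[j]
        fq = feature_map(q)
        denom = dot(fq, normalizer)
        outputs.append(
            [sum(fq[i] * memory[i][j] for i in range(d_k)) / denom for j in range(d_v)]
        )
    return outputs


def causal_linear_attention_direct(queries, keys, values):
    """Reference form: explicitly re-score every earlier token at each step."""
    d_v = len(values[0])
    outputs = []
    for t, q in enumerate(queries):
        fq = feature_map(q)
        weights = [dot(fq, feature_map(k)) for k in keys[: t + 1]]
        total = sum(weights)
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values[: t + 1])) / total
             for j in range(d_v)]
        )
    return outputs
```

Both functions compute the same outputs; the difference is that the recurrent version never looks back at earlier tokens, relying instead on what its store has integrated so far.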

According to Ksenia V. Kastanenka, an assistant professor of neurobiology at Harvard Medical School, the study highlights the largely unexplored computational potential of astrocytes and opens new possibilities for understanding intelligent behavior in the brain. The researchers next plan to test their model's predictions against biological experiments and use the results to refine their hypothesis.

The study also suggests that astrocytes may play a role in long-term memory, because the network needs to store information for future use. Further research could explore this idea and probe the distinct contributions of astrocytes to cognition and behavior.

The research, supported by the BrightFocus Foundation and the National Institutes of Health, lays groundwork for future work bridging computational neuroscience and the study of glial cells, particularly astrocytes.